by Mike Franchetti, SDI, Customer Insight Analyst

Some time ago a documentary was doing the rounds looking into the alarming habits of one of Britain’s most obsessive ‘hoarders’. In a film not without its sad moments, we were welcomed into a house stacked from floor to ceiling with belongings ranging from collectables to the unlikely-to-be-useful and common everyday rubbish. The poor soul had to crawl between two towers in order to reach his kitchen.

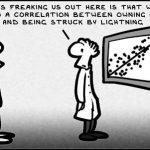

The process of ‘hoarding’ is not too dissimilar to present-day data collection methods. We create billions of data points each day and there has been a recent trend to grab as many of them as possible in order to make insightful business decisions. Software companies have responded accordingly, creating tools capable of handling such a volume and variety of data. However, comparatively little has been done to make identifying relevant and meaningful information an easy and efficient process. Employees working with data are tasked with wading through a swamp of information that includes data which should be collected, the unlikely-to-be-useful, and common everyday nonsense.

There are, of course, tricks to learn and tests to perform, but the Big Data bandwagon is not without its issues. Most people would prefer less data of higher quality but, given the challenges associated with collecting it, Big Data sources are attractive alternatives.

Statistical analysis is often the last, and most vital, step taken by data handlers, but it can also be the most straightforward. Before reaching that stage, however, a whole host of preparation is needed, and analysts are left longing for the days when datasets were of just the right size, packed with ‘nice’ numbers and easy-to-spot trends.

The smartphones in our pockets have capacities far, far greater than the computers NASA used in their Apollo missions – that alone says everything you need to know about the exponential growth in data and how Big Data is here to stay. Despite this, I believe in the next few years we will see an adjustment in focus and a rallying cry for good, meaningful data. The change will be driven by the need for improved efficiency in data analytics, perhaps combined with changes to data protection laws. The benefits have the potential to be two-fold: consumers will be happier releasing less information and organisations will end up with refined datasets.

It makes sense for a retailer to know purchase histories but databases showing everything from pets’ names to latest Tweets can quickly become overbearing. Useful information can take longer to identify and patterns become harder to explain.

Our documentary hoarder would argue he has access to vast chunks of information and is capable of answering many questions. He also crawls through a hole to get to his kitchen – there must be a better way.

Service desks face similar challenges. Every week there is a call for a new metric to be recorded, one that will better define how we’re delivering value. If we were to track all of the performance measures recommended to us, we’d be sitting on piles of data but with little direction. Our biggest problem as an industry, as highlighted in several pieces of SDI research, is a collective struggle to find methods of producing something meaningful with the data available. We need to improve how we collect and handle data, and start making it work for us.