Humanity is once again creating a powerful technology that can do amazing things. In just the last few months, we’ve all witnessed the growing role of AI across various industries. AI can automate data collection, email responses, software testing, invoicing and customer service, and it can even help with content creation.

There’s no doubt about the positive impact AI already has on many businesses. But some risks should not be ignored! So, before we start relying (too much) on AI, shouldn’t we address some of the ethical issues of AI in the workplace? What are some ethical considerations when using generative AI?

Exploring the implementation of AI in the workplace is an urgent matter that requires our attention. So, in this blog, we’ll discuss the concerns surrounding AI and reflect on some important questions.

Let’s dive in!

Importance Of Addressing Ethical Issues Of AI

According to recent research by the Prospect trade union, there is significant public backing for the regulation of AI. In a survey of more than 1,000 people, 58% agreed that the UK government should set rules on the use of generative AI (tools like ChatGPT or Google’s Bard) in the workplace to protect workers’ jobs.

Below are five concerns related to AI.

#1 The dilemma of job displacement

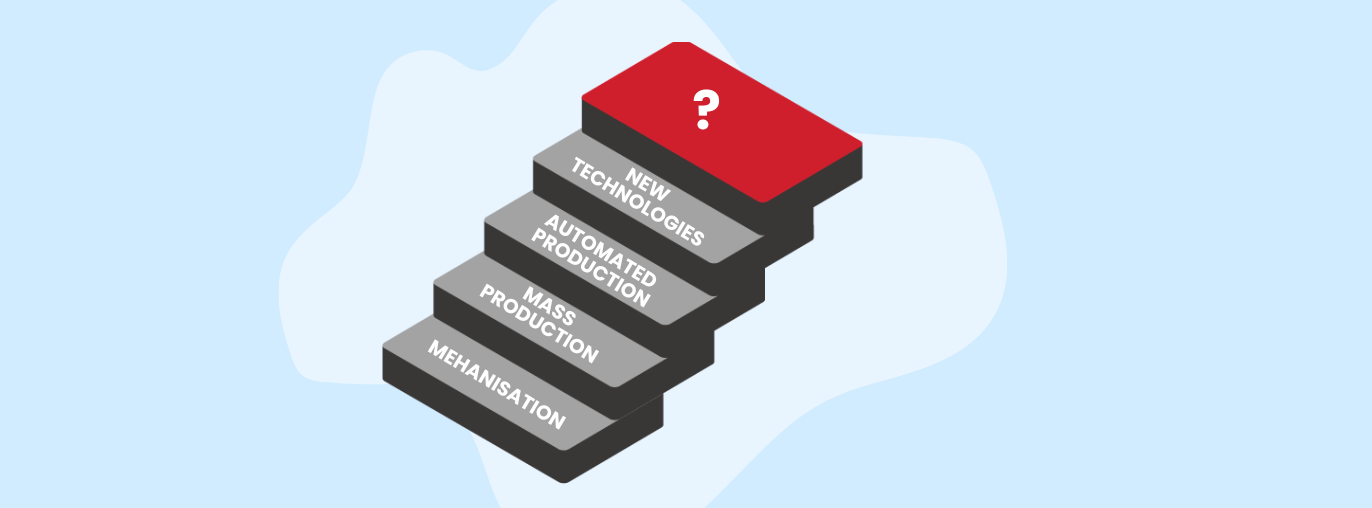

Imagine this: Robots and algorithms are implemented everywhere, and they are taking away job opportunities from humans. It may sound like a plot from a science fiction movie, but it isn’t that far from our reality.

The increasing use of AI-powered tools is already disrupting the job market. According to Goldman Sachs analysts, generative AI could expose the equivalent of 300 million full-time jobs to automation. Based on their analysis, administrative positions are the most vulnerable, followed by legal, architecture and engineering occupations.

“According to McKinsey & Company, automation may cause a displacement of 400 to 800 million jobs by 2030, resulting in up to 375 million individuals needing to transition to different job categories.”

Although AI can boost employee productivity, speed and efficiency, it could also lead to significant job losses and unemployment in several sectors, particularly in developing countries where labour-intensive jobs are prevalent.

It’s also concerning that the large-scale adoption of AI will most likely affect the most vulnerable populations, such as women, people of colour, and low-skilled workers. This could lead to greater income inequality, poverty and social exclusion.

However, the outlook isn’t entirely negative. Advocates of AI argue that there is no cause for concern, as we have consistently adapted to new technologies in the past. With the rise of AI, we should also expect new job opportunities – especially in data science, security, and machine learning.

Still, we must consider how AI will affect both the number of jobs available and the quality of work.

So, let’s take a moment to consider this.

🔹 Do we know exactly what types of job opportunities are emerging and which ones are being phased out?

🔹 Will individuals who have lost their middle-skilled jobs be able to transition into high-skill roles?

🔹 Do we know how to make this transition less painful?

We should prepare individuals for AI integration through training programmes and close the gap between the skills employers need and the training available. We should also keep identifying new job opportunities and the skills they require.

And we should think about implementing regulations in the workplace that will protect employees.

#2 The Hidden Dangers of AI Bias

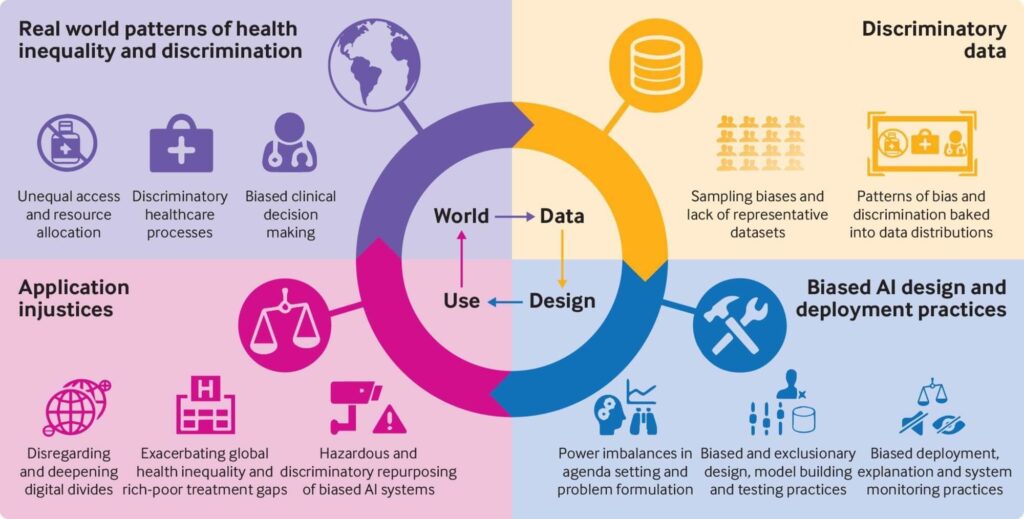

AI bias can arise from biases in the data used to train AI algorithms. When that data under-represents or misrepresents certain groups, the resulting system can discriminate against them unintentionally, and such discrimination can have significant negative consequences for society.

Avoiding bias requires diverse and inclusive datasets. Eliminating it from AI and machine learning systems also requires a thorough understanding of how and where those systems will be applied.

🔹 But how do you identify potential risks of unfairness and discrimination?

🔹 What if the dataset is too small for proper training?

🔹 What if some groups have been overlooked or excluded?

If the data is not thoroughly analysed, the source of the bias will go unnoticed (see the example above). To avoid such issues, it is important to investigate and verify where a dataset comes from and what it contains. It also helps to apply analytical techniques, such as comparing outcomes across groups, and to test different models in different environments.
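One common way to start such an analysis is to compare how often an AI system produces a favourable outcome for each group, and to flag groups with too few examples to judge at all. The minimal Python sketch below does this for a hypothetical CV-screening tool; the records, group labels and both thresholds are illustrative assumptions. The 0.8 ratio borrows the "four-fifths" rule of thumb from US employment-selection guidelines, and a real audit would need proper statistical testing and domain expertise.

```python
from collections import defaultdict

# Hypothetical results from an AI screening tool: (group, was_shortlisted).
# The group labels and outcomes are invented for illustration only.
RECORDS = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
    ("group_b", False), ("group_b", True), ("group_b", False), ("group_b", False),
    ("group_c", True),
]


def selection_stats(records):
    """Return {group: (count, selection_rate)} for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        positives[group] += int(selected)
    return {g: (totals[g], positives[g] / totals[g]) for g in totals}


def audit(records, min_count=3, threshold=0.8):
    """Flag groups that are too small to judge, and groups whose selection
    rate falls below `threshold` times the best-treated group's rate."""
    stats = selection_stats(records)
    too_small = sorted(g for g, (n, _) in stats.items() if n < min_count)
    # Only compare rates for groups with enough examples to be meaningful.
    rates = {g: r for g, (n, r) in stats.items() if n >= min_count}
    best = max(rates.values()) if rates else 0.0
    disparate = sorted(g for g, r in rates.items() if r < threshold * best)
    return too_small, disparate


if __name__ == "__main__":
    too_small, disparate = audit(RECORDS)
    print("Too few examples:", too_small)
    print("Possible adverse impact:", disparate)
```

Here, `group_c` is flagged for having a single record, which mirrors the question of whether some groups have been overlooked, and `group_b` is flagged because its selection rate (25%) is well below that of `group_a` (75%).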

But more importantly, we always have to prioritise good intentions when working with artificial intelligence.

#3 The disappearance of privacy

As we strive for automation, convenience, and productivity, we may have unintentionally sacrificed a crucial aspect: our privacy. The widespread use of AI-powered surveillance, employee monitoring, and data collection has left us open and defenceless.

Many privacy and data protection concerns stem from the collection and use of sensitive personal data. Most AI systems rely on personal information, and because it is often unclear how they process it, AI transparency is widely seen as one of the key concerns.

So, how do we lower the risks of unauthorised access and potential data breaches?

There are several tools that organisations can use to protect personal data and restrict unauthorised access or processing. These include data masking and anonymisation, encryption, access control and audit tools.
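As a rough illustration of the first of these tools, the minimal Python sketch below prepares an employee record before it is shared with an AI tool: it replaces the name with a keyed hash (HMAC-SHA256), masks most of the email address, and drops a field the task does not need. The field names, the sample record and the secret key are hypothetical; in a real system the key would be loaded from a secrets manager rather than written in the code.

```python
import hashlib
import hmac

# Hypothetical secret key; in a real system, load it from a secrets manager.
SECRET_KEY = b"replace-with-a-securely-stored-key"


def pseudonymise(value: str, key: bytes = SECRET_KEY) -> str:
    """Replace an identifier with a stable keyed hash (HMAC-SHA256).
    The same input always maps to the same token, so records can still be
    joined, but without the key the token cannot practically be linked
    back to the original value."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]


def mask_email(email: str) -> str:
    """Keep the first character and the domain; hide the rest of the local part."""
    local, _, domain = email.partition("@")
    if not local or not domain:
        return "***"
    return local[0] + "*" * (len(local) - 1) + "@" + domain


def protect_record(record: dict) -> dict:
    """Return a copy of an employee record that is safer to share.
    The field names ('name', 'email', 'salary') are assumptions for this sketch."""
    safe = dict(record)
    safe["name"] = pseudonymise(record["name"])
    safe["email"] = mask_email(record["email"])
    safe.pop("salary", None)  # data minimisation: drop fields the task does not need
    return safe


if __name__ == "__main__":
    employee = {"name": "Jane Doe", "email": "jane.doe@example.com",
                "salary": 52000, "team": "Support"}
    print(protect_record(employee))
```

Keyed hashing is pseudonymisation rather than true anonymisation: anyone holding the key can re-identify people by hashing candidate names, so the key itself must be protected with the encryption and access-control measures mentioned above.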

#4 The unreliable nature of AI tools

⚡ Don’t trust AI blindly, as it can mislead you!

AI solutions rely heavily on training data, which unfortunately makes them vulnerable to deception. Current AI systems generally cannot detect inaccuracies in data unless they have learned from extensive example data that is itself accurate.

This means that AI-generated output can be inaccurate and its sources unknown, which can be very problematic. Letting our new AI buddies make important decisions for us may sound tempting, but we shouldn’t hand over that responsibility.

AI-generated content may include falsehoods, inaccuracies, misleading information, and fabricated facts, known as “AI hallucinations.”

Imagine that you’re working at a bank and using popular generative AI tools to write code. If you can’t validate the models’ outputs and don’t know where they come from, you might be jeopardising the entire project (and organisation).
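One practical safeguard is to check AI-generated code against cases a person has worked out independently before it goes anywhere near production. The minimal Python sketch below illustrates the idea; the function, its bug and the reference values are hypothetical examples invented for illustration, and in a real project these checks would live in the team’s normal test suite.

```python
# Hypothetical function suggested by a generative AI tool. It is meant to
# apply annual compound interest, but it actually applies simple interest.
def ai_suggested_balance(principal: float, annual_rate: float, years: int) -> float:
    return round(principal * (1 + annual_rate * years), 2)


# A corrected version written and reviewed by a person.
def compound_balance(principal: float, annual_rate: float, years: int) -> float:
    return round(principal * (1 + annual_rate) ** years, 2)


# Expected values worked out independently: principal * (1 + rate) ** years.
REFERENCE_CASES = [
    ((1000.0, 0.05, 1), 1050.00),
    ((1000.0, 0.05, 2), 1102.50),
    ((500.0, 0.10, 3), 665.50),
]


def validate(func, cases, tolerance=0.005):
    """Return (args, expected, actual) for every case where func is wrong."""
    failures = []
    for args, expected in cases:
        actual = func(*args)
        if abs(actual - expected) > tolerance:
            failures.append((args, expected, actual))
    return failures


if __name__ == "__main__":
    for args, expected, actual in validate(ai_suggested_balance, REFERENCE_CASES):
        print(f"balance{args}: expected {expected}, got {actual}")
    print("Corrected version failures:", validate(compound_balance, REFERENCE_CASES))
```

The AI-suggested version passes the one-year case, because simple and compound interest agree after a single year; only the multi-year cases expose the bug. That is exactly the kind of error that slips through when outputs are not checked.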

Here are a few important questions to consider.

🔹 Do we truly feel comfortable relying entirely on machines that lack transparency and can behave unpredictably?

🔹 Shouldn’t we at least demand more transparency if we want to keep involving AI in decision-making?

🔹 Are we talking enough about the consequences?

🔹 And how do we protect ourselves from possible consequences?

#5 The truth about AI copyright

If you’ve recently used any generative AI tools, you’ve probably been impressed by the quality of the output, especially given how quickly it’s generated. But have you ever wondered how these stunning designs, poems, AI videos, voices and summaries are created?

Although it would be nice to believe it’s all magic – (unfortunately) we all know that isn’t the case. Behind it are algorithms and large amounts of training data.

So, naturally, there are concerns about copyright and intellectual property rights in AI-generated content.

“Generative AI is rife with potential AI Ethical and AI Law legal conundrums when it comes to plagiarism and copyright infringement underpinning the prevailing data training practices.”

– Forbes

🔹 Do you know who owns the content produced by generative AI platforms for you or your customers?

🔹 How do you protect that ownership?

🔹 How do we protect human connection and creativity while using AI tools?

These issues range from legal questions, such as ownership uncertainty and infringement, to the use of unlicensed content in training data, and all of them need to be addressed. It’s up to legal systems to provide more clarity and define the boundaries that will protect us and our intellectual property rights.

💡 But what about the creativity and innovation? Is AI killing creativity or enhancing it?

For example, generative AI is already changing the nature of content creation, enabling many people to create content they might not otherwise have been able to produce, or not at that quality or speed.

It’s up to us to decide when, and when not, to use these tools and to make ethical decisions about them. As we continue to engage with AI tools, we must consider how our interactions affect human creativity, and we should always leave enough space for it to flourish. As humans, it is our responsibility to protect the most precious element of our lives – our connections with other humans.

Final Thoughts

The long-term consequences of relying heavily on AI for decision-making are still unknown. So, the big question is: how do we ensure AI remains a tool rather than a replacement? Considering its potential issues now is essential to ensuring it’s used responsibly and ethically.

So, what should we do? We should call on organisations to prioritise ethical considerations when implementing AI. To address these concerns, we must insist on ongoing research, regulation and transparency, and emphasise human-centred approaches to leveraging AI technology. After all, AI’s real potential lies in augmenting human capabilities rather than replacing them.