by Stephen Mann

Some people might feel uncomfortable about artificial intelligence (AI). They might have grown up watching the Terminator movies and have yet to get past Skynet and the attempted extermination of humanity by machines. Or they might just worry that the technology will adversely affect something that’s currently inherently “human” – either taking something away, or providing an inferior experience or outcome (or both).

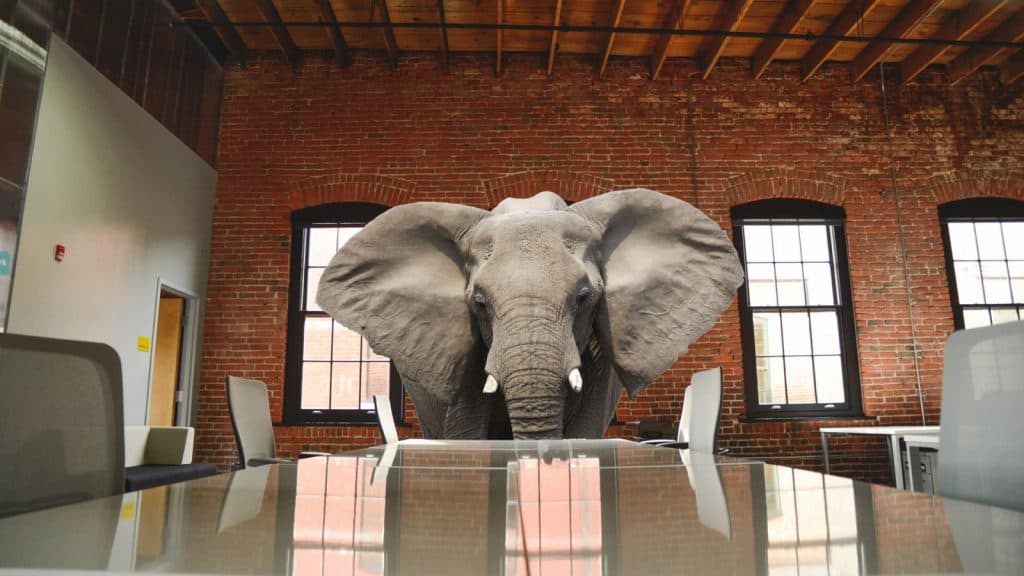

When we look at the use of AI by the IT service desk, there’s an elephant in the “AI for IT support” living room. It’s something that we need to talk about openly. If left unsaid, it will make the adoption of AI-based capabilities more difficult than it needs to be – and potentially suboptimal.

It’s not an elephant, it’s a herd (or, if you’re a smarty-pants, a memory)

There are, in fact, at least three elephants in the room to consider – three elephants to either comfortably seat and engage with, or to politely escort off the premises. The three that immediately spring to mind are:

The service-provider human perspective – “AI will steal our (service desk agent) jobs”

The service-consumer human perspective – “People want to be helped by other people not technology”

The technology versus human perspective – “Technology will never be able to understand situations and needs like other humans”

And, interestingly, the first two can both be considered in the context of another service desk technology/capability – self-service.

1. Will AI steal service desk agent jobs?

Consider this question in terms of AI versus self-service. Not as an either-or, as there are overlaps. Instead, consider the use of AI in terms of what the IT industry has seen and done to date with self-service technology. It’s something that’s more of a “known” for service desks, and thus easier to talk about.

Was there a big hoopla over self-service stealing service desk agent jobs? No. In fact, IT self-service has spent most of the last decade being viewed as something of a savior for overworked, and under pressure, IT service desks. And if you consider AI’s value proposition for IT support organizations, for instance that:

Issues can be deflected away from the service desk, taking some of the pressure off agents and freeing them up to spend time on more valuable activities

End-user, or customer, needs can be met more quickly, with a better customer experience

Operational costs can be reduced or budgets reprioritized to deliver additional services

There’s greater insight into issues, opportunities, and operational performance.

Then you could easily mistake these for the benefits that self-service can bring.

So why the fuss over service desk job losses with AI but not with self-service? The real answer is that there isn’t one, outside the media buzz over AI taking jobs wholesale. In fact, many support people see AI as a positive, not a negative. Research from both the Service Desk Institute (SDI) and ITSM.tools shows this, as does the ad-hoc canvassing of session attendees at events – with AI’s potential to help support agents outweighing its potential to harm service desk employment opportunities.

2. Do people prefer to be helped by people?

Again, let’s use self-service as a mirror for the AI opportunities, for instance the use of chatbots and virtual personal assistants. It particularly works if you consider chatbots to be an evolution of self-service more than of chat.

To date, self-service – while widely adopted from a technology point of view – has had limited end-user adoption success. As a result, only a few organizations have realized the expected return on investment (ROI):

“The number of organizations that have realized these benefits and have achieved the anticipated ROI are few, less than 12% according to recent SDI research.”

But who can blame people for avoiding badly-delivered IT self-service capabilities that are deemed to make life harder not easier? And this is a learning opportunity for AI too – people need to:

a. Understand their options and why some options are better than others (for them, not just the organization)

b. Be able to easily find, access, and use their chosen help/service option

c. See that the chosen option is easier, and more beneficial, to use than what they have previously defaulted to, e.g. the telephone

d. Have choices that cater for different scenarios.

People will ultimately choose what’s easiest for them in different scenarios. If the non-human choices offer an inferior service experience, then of course people will continue to choose the human option(s). Just look to the variety of available options, and the choices made, in the consumer world – how do you contact Amazon for help? Self-help? Email? Chat? Or telephone call? It’s ultimately what works best for you, for a given need and circumstance, and it may or may not involve a human going forward.

The bottom line is that people will prefer to be helped by humans until they receive a better service experience from something without third-party human involvement, whether that is self-service or a chatbot. The important thing is that people will stick with what they know until they are educated about, and convinced of, a better way of doing things.

3. Will technology be able to comprehend and engage as well as humans?

Let’s start with the “as well as humans” part of this. It somewhat assumes that all humans are able to, and consistently do, provide a similar (great) service experience. It assumes that all people have similar knowledge, skills, and experiences, plus the right attitude and understanding of how customers want to be treated. For instance, appreciating the customer’s situation and being empathetic when needed.

Then add in that people get tired, might be affected by work or personal-life “situations,” or just have “an off day.” People aren’t robots, and the service experience offered and received will potentially differ across teams, i.e. people, and even times of the day, week, or month (for individuals).

Humans are also inherently prejudiced – we prejudge, i.e. have preconceived opinions that are not necessarily based on fact. It’s where we generalize, compartmentalize, and potentially make the wrong assumptions. Sometimes such “mental shortcuts” can be beneficial, but they can also do harm either to the customer or the situation at hand. For instance, assuming that a printer issue is always unimportant, when it is in fact important if the printer is a critical part of business operations.

Technology, on the other hand, will be consistent and will work with facts, not prejudices (although it’s perfectly possible that human prejudice can influence some of the source “knowledge” used by AI). And it’s consistent in a way that can be tweaked to continuously improve upon past performance, with AI having the ability to self-learn. This covers not just the mechanics – the “put tab A in slot B” – of servicing support needs, but also understanding sentiment, i.e. how the customer is feeling about the current interaction. The technology can then adjust accordingly or escalate the interaction at hand to a human if needed (and hopefully before it’s really needed).

So, is human-based support really the most-loved option for customers? It will be, but only until the technology can do better. As with self-service, there’s a tipping point that needs to be passed – where the technology is easier and quicker to use, and with an acceptable experience. Ultimately, it doesn’t have to replicate the humanness of other channels to become the preferred method for receiving service and support – it has to better meet the full gamut of customer needs.

Do you agree or disagree with these? What other elephants in the room do you see with AI? Please let me know in the comments section.